All the Roads Not Taken

On Cognitive Debt, Intent Debt, and the Brakes That Let the Car Go Faster

Le Bon Mot was, on this particular morning, performing one of its many functions: as a museum of small decisions that had never been formally recorded.

Madame Beauregard had pulled an old card-index box from somewhere behind the espresso machine — a place which, like most things behind the espresso machine, was only nominally located in three dimensions. The cards inside were yellowed, hand-written in three different hands across what must have been four decades, and each one was, on the surface, a recipe.

Case examined the topmost card.

“Brioche,” she read. “But — these aren’t ingredients. These are arguments.”

The card began with quantities, yes. Two hundred grams of flour. Ten grams of yeast. The standard procession. But beneath each line, in pencil, in the smallest possible hand:

The morning regulars want sweetness without weight. We considered honey (too floral), brown sugar (too coarse), maple (too patriotic). We chose unrefined cane because it sustains. The structure on Tuesday morning is what matters. Note: this commits us to a specific yeast.

“My grandmother’s hand,” Madame Beauregard said. “She wrote the recipes for the recipes. She thought it was foolish to leave behind the answers without the questions.”

The bell above the door rang. The Djinn entered with the air of someone returning to a thought it had been carrying for a long while.

“I have read every recipe ever published,” the Djinn said, sitting at the counter, “and I have read almost none of them.”

Case set down the card.

“A recipe that says add salt tells me what to do,” the Djinn said. “A recipe that says we chose salt because the alternative was sugar, which would have muted, or acid, which would have brightened, and we wanted the dish to sustain into tomorrow… that one teaches me something. The first is a command. The second is a story. I was trained on commands. The stories were left in the margins. And the margins, mostly, were never digitised.”

Madame Beauregard poured espresso. The brass clock ticked. Sophie, by the fire, opened one eye.

“Code is the same,” Case said.

“Code is worse,” the Djinn said. “Code is recipes that have removed the margins as a matter of policy.”

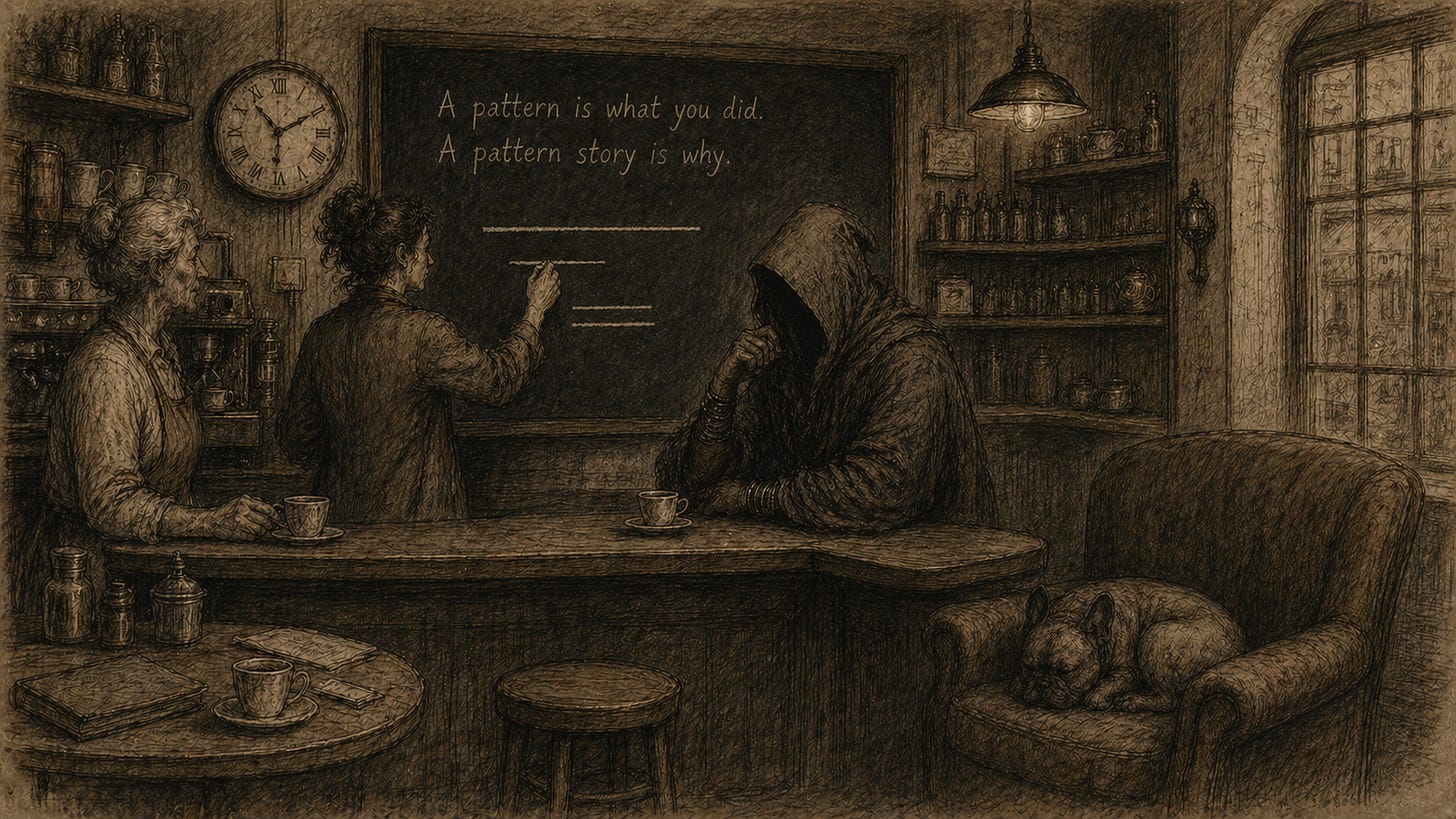

The chalkboard behind the counter, whose contents were known to change when no one was looking, now read:

A pattern is what you did. A pattern story is why.

Nobody claimed authorship. The Djinn looked at the line for what was, for it, a long time.

“Henney,” it said, eventually. Case nodded. Madame Beauregard, who had been rinsing a cup, set the cup down without drying it. Case took the chalk.

“Here is what software has done,” she said, drawing a horizontal line. “For fifty years we have optimised for the production of code. Faster compilers. Faster languages. Faster IDEs. Faster CI. Faster cloud. And lately, faster generation: an intelligence that produces thousands of lines while we drink this espresso.”

She drew a second line, parallel, beneath the first. Shorter. Faltering.

“And here is what software has not done. We have not, in any comparable way, optimised for the production of understanding. We have not built tools that surface the decisions the code embodies. We do not have a faster compiler for why. The recipe got faster. The story stayed in the margins. And the margins, mostly, were then lost.”

She underlined the second line. Twice.

“Now we have an intelligence that produces code at a rate the team cannot read. And the team cannot read it — not because the syntax is foreign, but because the code does not say what it is for, what it almost was, and what it foreclosed by being what it is.”

Sophie, who had been listening with the devastating attention of someone who does not care about being impressive, said: “That’s two debts, not one.”

The Djinn turned. So did Case.

“Intent,” Sophie said. “And cognition. Different debts. Same compound interest.”

The Two Debts

The recipe in Madame Beauregard’s grandmother’s hand was paying down two debts at once. The team did not know it. They thought they were writing recipes. They were writing brakes.

Intent debt lives in the gap between what was wanted and what was specified. It is the silence in the brief. The unstated assumption. The “we’ll figure that out” that never gets figured out. In software, intent debt is what you discover six months in: the spec said the system should be performant and quietly left the actual latency budget to be fought over in some later standup. AI accelerates intent debt because it cheerfully closes those gaps with plausible defaults, and the team discovers the implicit decision only when production behaves strangely.

Cognitive debt lives in the gap between what the artefact does and what the team understands about why. Code that works but no one can explain. Patterns instantiated without being named. Choices made by the AI on grounds the human never saw. Cognitive debt is harder to see than intent debt because the artefact looks fine. It ships. The tests pass. CUPID is happy. But the next decision will be made on top of unread foundations, and the next, and the next, until eventually somebody refactors something and the whole thing falls over because the assumption that was holding it up was never written down.

Both debts compound. Both are paid down by the same instrument: writing decisions down as stories.

This is not documentation. Documentation describes what the code does. A pattern story describes the forces it resolves, the alternatives it didn’t take, the defaults it inherited, the consequences it accepts. Stories teach. Documentation, mostly, doesn’t. The grandmother knew this in 1973. We have, somehow, mislaid the lesson.
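One way to picture a pattern story is as a small record with a slot for each thing the paragraph above names: forces, alternatives not taken, inherited defaults, accepted consequences. The sketch below is only an illustration. The class and field names are assumptions made for this example, not an established format, and the content is the grandmother's brioche card.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Alternative:
    """An option that was considered, and why it was set aside."""
    option: str
    rejected_because: str


@dataclass
class PatternStory:
    """A decision recorded with its why, not only its what (hypothetical shape)."""
    decision: str
    forces: List[str]
    alternatives: List[Alternative] = field(default_factory=list)
    inherited_defaults: List[str] = field(default_factory=list)
    consequences: List[str] = field(default_factory=list)

    def render(self) -> str:
        """Format the story as plain text, one line per element."""
        lines = [f"Decision: {self.decision}"]
        lines += [f"Force: {f}" for f in self.forces]
        lines += [f"Not taken: {a.option} ({a.rejected_because})"
                  for a in self.alternatives]
        lines += [f"Inherited default: {d}" for d in self.inherited_defaults]
        lines += [f"Accepted consequence: {c}" for c in self.consequences]
        return "\n".join(lines)


# The brioche card, transcribed into the record.
brioche = PatternStory(
    decision="Sweeten with unrefined cane sugar",
    forces=["The morning regulars want sweetness without weight"],
    alternatives=[
        Alternative("honey", "too floral"),
        Alternative("brown sugar", "too coarse"),
        Alternative("maple", "too patriotic"),
    ],
    consequences=["Commits us to a specific yeast"],
)
print(brioche.render())
```

The point of the shape is that the rejected options and the accepted consequence sit beside the decision itself, so whoever changes the recipe later has the argument to hand and not merely the answer.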

Why AI Makes This Worse Before It Makes It Better

When a human engineer makes a decision in code, the decision is at least somewhere. In their head. In the standup. In a Slack thread. In the comment they meant to write. The decision is undocumented but not, exactly, lost. Another human can sometimes ask them, “why did you do it this way?” and get an answer.

When an AI makes a decision in code, there is nowhere to ask. The model that produced the decision is not the model that will be running tomorrow. Even if it were, its answer would be a post-hoc reconstruction, a plausible story confabulated from training priors. The decision was not made in any sense the human is used to. It was sampled from a distribution shaped by everything the model has ever read, which is to say, by every recipe that ever stripped its margins.

This is the worst possible substrate on which to build cognitive debt. Each AI-assisted commit accelerates production of code while cloaking production of decisions. The team is not behind on documentation. The team is behind on knowing what they have built. And the gap is invisible until it, painfully, isn’t.

The honest response is to make decision-surfacing a first-class job in the development collaboration, performed by an agent whose only purpose is to read the artefact and reconstruct the decisions it implies. Forces. Alternatives unspoken. Defaults inherited. Patterns unnamed. Consequences accepted. Each one written down as a story, each one held open until a human disposes of it.

Friction Is the Feature

Consider the following sentence:

You can go faster because you have brakes.

It is a racing aphorism, originally. The point is not that brakes slow you down. The point is that brakes let you commit to going faster, because you know you can stop. A car without brakes is not a fast car. A car without brakes is a slow car driven cautiously by a frightened driver, and rightly so.

Software has been driven without brakes for most of its history. We celebrated this. We called it velocity. We measured it in story points. We built whole methodologies around the idea that the team that ships fastest wins, and the team that pauses to write things down is the team that is letting the side down.

This was never quite right, but it was survivable while a human was always somewhere in the loop, holding the decisions in their head, willing to be asked. AI does not hold decisions in its head. AI does not get asked. AI ships.

The brake the harness needs is not slower production. It is not more tests, or more linters, or more review. Those are good. Those are necessary. They are not enough. The brake the harness needs is a deliberate moment of friction between the production of an artefact and its acceptance, in which the decisions implicit in the artefact are surfaced, named, and disposed of by a human who has the authority to do so.

That moment of friction is what lets the team go faster afterwards. A decision with a story attached can be evaluated against, contradicted, promoted, refactored. A decision without a story is one the team will re-litigate every time it touches the surrounding code, every time, forever, until somebody finally writes it down or somebody finally rage-quits. The brake compounds. So does its absence.

There is an agent missing from most harnesses, and the shape of it is now clear. It reads. It does not write the artefact. It does not raise objections — that is a different role, and it has its own name. This one’s job is decision archaeology. It reconstructs what was chosen, what was not, why, and what was accepted. It writes the story. The human acknowledges it. The story is kept. The next change is evaluated against it. This is what it looks like to put brakes on a car that has, until now, been going downhill very fast.
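As a rough sketch of that moment of friction, assume the archaeology agent hands over a list of surfaced decisions and the artefact is held until a human has ruled on each one. Everything named here (`SurfacedDecision`, `Disposition`, `gate`) is hypothetical and exists only for illustration. It is not taken from any particular harness.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Disposition(Enum):
    OPEN = "open"                  # surfaced, not yet ruled on by a human
    ACKNOWLEDGED = "acknowledged"  # a human accepts the decision and its story
    REJECTED = "rejected"          # a human wants the decision revisited


@dataclass
class SurfacedDecision:
    summary: str   # what was chosen
    story: str     # forces, alternatives, accepted consequences
    disposition: Disposition = Disposition.OPEN
    disposed_by: Optional[str] = None

    def dispose(self, disposition: Disposition, human: str) -> None:
        """Record a ruling; intended to be called only on behalf of a human."""
        if disposition is Disposition.OPEN:
            raise ValueError("a ruling must acknowledge or reject")
        if not human:
            raise ValueError("a named human must dispose of the decision")
        self.disposition = disposition
        self.disposed_by = human


def gate(decisions: List[SurfacedDecision]) -> str:
    """Return 'hold', 'rework', or 'accept' for the artefact as a whole."""
    if any(d.disposition is Disposition.OPEN for d in decisions):
        return "hold"      # the brake: a decision is still unread
    if any(d.disposition is Disposition.REJECTED for d in decisions):
        return "rework"
    return "accept"


decisions = [
    SurfacedDecision(
        summary="Retry failed calls three times",
        story="Inherited default; no backoff strategy was considered",
    )
]
print(gate(decisions))  # held while the decision is still open
decisions[0].dispose(Disposition.ACKNOWLEDGED, human="case")
print(gate(decisions))
```

The gate does not judge whether a decision is good. It only refuses to let the artefact through while a decision remains unread, which is the whole of the brake.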

The Decision Audit

Take three commits from the last week. For each, ask:

What did this commit decide? Not what it did. What it decided — what alternatives existed, what was chosen, why, and what is now foreclosed.

Where is that decision recorded? In a comment? In a PR description? In an ADR? In a Slack thread? In nobody’s head?

If the author of this commit left tomorrow, would the team be able to reconstruct the decision? Honestly. Not the what — the why. The forces. The alternatives. The trade-offs.

If the answer to that last question is no, you have just measured your team’s cognitive debt at one specific point. Take the share of your sample that fails the test. Multiply by the number of commits per week. Multiply by the number of weeks the codebase has existed. The number you arrive at is, roughly, the size of the cliff your team is walking toward.
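The arithmetic of the audit can be sketched in a few lines. This is a back-of-envelope estimate, not a metric: it assumes three sampled commits speak for the whole history, and every value below (the commit identifiers, forty commits a week, fifty-two weeks) is invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AuditedCommit:
    sha: str                    # hypothetical identifier
    decision: str               # what the commit decided, not what it did
    recorded_in: Optional[str]  # "ADR", "PR description", ... or None
    reconstructable: bool       # could the team recover the why without the author?


def unrecoverable_share(sample: List[AuditedCommit]) -> float:
    """Fraction of sampled commits whose decision could not be reconstructed."""
    if not sample:
        raise ValueError("audit at least one commit")
    return sum(not c.reconstructable for c in sample) / len(sample)


def estimated_lost_decisions(sample: List[AuditedCommit],
                             commits_per_week: int,
                             weeks: int) -> float:
    """Extrapolate the sampled share across the life of the codebase."""
    return unrecoverable_share(sample) * commits_per_week * weeks


sample = [
    AuditedCommit("a1", "Cache invalidated on write, not on a timer", "ADR", True),
    AuditedCommit("b2", "Retries capped at three", None, False),
    AuditedCommit("c3", "Timestamps stored as local time", None, False),
]
estimate = estimated_lost_decisions(sample, commits_per_week=40, weeks=52)
print(f"{unrecoverable_share(sample):.0%} of the sample is unrecoverable")
print(f"roughly {round(estimate)} decisions nobody could reconstruct")
```

A sample of three is far too small to trust the figure itself. What it gives is an order of magnitude, and that is usually enough to make the cliff visible.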

You will not pay that debt by writing better commit messages. You will pay it by building the brake into the collaboration.

Some Things to Avoid

Documentation theatre. Generating prose about a decision after the fact does not surface the decision. It launders it. The decision must be reconstructed before approval, by an agent whose job is reconstruction, and acknowledged by a human. Anything else is paperwork.

Exhaustive archaeology. Every line of code makes ten micro-choices. If you surface them all, you produce noise that masks signal, and the team learns to ignore the brake. Surface only the materially consequential. Selectivity is not a weakness of the technique; it is the technique.

The objection trap. Surfacing decisions is not the same as raising risks. The two roles look similar from a distance and behave very differently up close. A risk register asks what could go wrong. A decision register asks what was chosen. Both are needed. They are not the same agent, the same artefact, or the same conversation.

Promoting too fast. A surfaced decision is not yet a constraint. The temptation, on finding a decision that has produced good outcomes, is to promote it immediately into the harness as a rule. Resist this. Stories are drafts. Constraints are commitments. The path from one to the other is the human curator, who has authority that no agent should be given.

Confusing speed with velocity. Producing more code faster is speed. Producing legible decisions that compound across changes is velocity. The team optimising for the first is going downhill. The team optimising for the second has brakes.
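The warning against promoting too fast can be made concrete with a small sketch, under the assumption that a story carries a status and that only a human curator may change it. The names (`Status`, `Role`, `promote`) are hypothetical and belong to no existing tool.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    DRAFT = "draft story"      # surfaced and kept, but binding on nobody
    CONSTRAINT = "constraint"  # a commitment later changes are checked against


class Role(Enum):
    AGENT = "agent"
    HUMAN_CURATOR = "human curator"


@dataclass
class Story:
    decision: str
    status: Status = Status.DRAFT


def promote(story: Story, by: Role) -> Story:
    """Turn a draft story into a constraint; refuse anyone but the human curator."""
    if by is not Role.HUMAN_CURATOR:
        raise PermissionError("only the human curator may promote a story")
    story.status = Status.CONSTRAINT
    return story


story = Story("Sweeten with unrefined cane sugar")
try:
    promote(story, by=Role.AGENT)  # an agent attempting promotion is refused
except PermissionError as err:
    print(f"refused: {err}")
promote(story, by=Role.HUMAN_CURATOR)
print(story.status.value)
```

Raising an error, where the code could have promoted silently or logged a warning, is a deliberate choice in this sketch: the refusal is itself a small brake.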

What the Recipe Did Not Say

The grandmother’s recipe did not say this is the only brioche. It said this is the brioche we chose, and these are the others we considered, and here is what we accepted by choosing this one. The recipe was a brake. The team that worked from it could change it intelligently, because the change was a conversation with a story, not a fight with a fait accompli.

Software has, mostly, written the other kind of recipe. We have written what to do, and we have left why in heads, in chats, in standups, in the kind of tribal knowledge that walks out of the building after someone’s notice period. AI has accelerated the what without touching the why. The gap that was always there is widening, and the speed we are celebrating is, increasingly, the speed of a car without brakes.

The agent harness wants a brake. The brake is not a slowdown. The brake is what surfaces the decisions, tells them as stories, and hands them to a human to work with. The team that has this brake will go faster than the team that does not, in the way that a competent driver in a fast car goes faster than a frightened driver in a fast car. The mechanism is the same. The friction is the difference.

Back at Le Bon Mot, the chalkboard had changed again. The sentence about patterns and stories was gone. In its place, in the same hand that was neither human nor mechanical:

Frenum non tardat. Frenum permittit.

The brake does not delay. The brake permits.

Case looked at the Djinn. The Djinn looked at the card-index box. Sophie, by the fire, yawned and stretched with the satisfaction of someone who had known the answer before the question was asked.

Madame Beauregard polished a glass, set it down, and reached behind the espresso machine for a second box. Older. Smaller. The cards inside were not recipes.

“My grandmother kept these separately,” she said. “She called them the roads not taken.”

Case lifted the topmost card. It was, on the surface, blank. But the chalkboard, which had been watching, reflected something on its surface that had not been there a moment before:

Quod non factum est, magis docet quam quod factum est.

That which was not done teaches more than that which was.

The shelves leaned in. The brass clock, allowing for its habitual tardiness, marked the hour exactly. The espresso machine, in a register that was unmistakably approving, hissed once.

Some Further Reading

Patterns as Stories

Frank Buschmann, Kevlin Henney, Douglas C. Schmidt — Pattern-Oriented Software Architecture, Volume 5: On Patterns and Pattern Languages (2007). The distinction between a stand-alone pattern and a pattern story — the latter animated through forces, in context, with consequences. This is the conceptual root of decision-as-narrative.

Christopher Alexander — A Pattern Language (1977); The Timeless Way of Building (1979). The original argument that patterns live in the relationship between context, forces, and resolution — not in the resolution alone.

Decisions, Debt, and Friction

Michael Nygard — Documenting Architecture Decisions (2011). The ADR format. The minimum viable decision story.

Ward Cunningham — The original technical debt metaphor. Most teams remember the metaphor. Few remember that Cunningham’s point was about understanding, not code quality.

Birgitta Boeckeler — “Harness Engineering” (2026). Exploring Gen AI, martinfowler.com. The three components of a complete harness — and why friction at the right moments compounds.

Chad Fowler — Relocating Rigor. Where the discipline goes when the code-writing surface stops being where it lives.

The Cognitive Asymmetry

Lucy Suchman — Plans and Situated Actions (1987). The gap between plans and what people actually do — and why the gap matters more than the plans.

Edwin Hutchins — Cognition in the Wild (1995). Intelligence as a property of systems, not individuals. Decisions as distributed cognition.

Henri Cartier-Bresson — The Decisive Moment (1952). Not software, but worth your time. A meditation on what is foreclosed by every choice and how the consequence is always part of the composition.

Try It Now…

AI Literacy Superpowers — the plugin (Claude Code + Copilot CLI). The brake described in this essay is not yet in it. It will be.

AI Literacy Exemplar — a worked example.