The Myths We Measure By

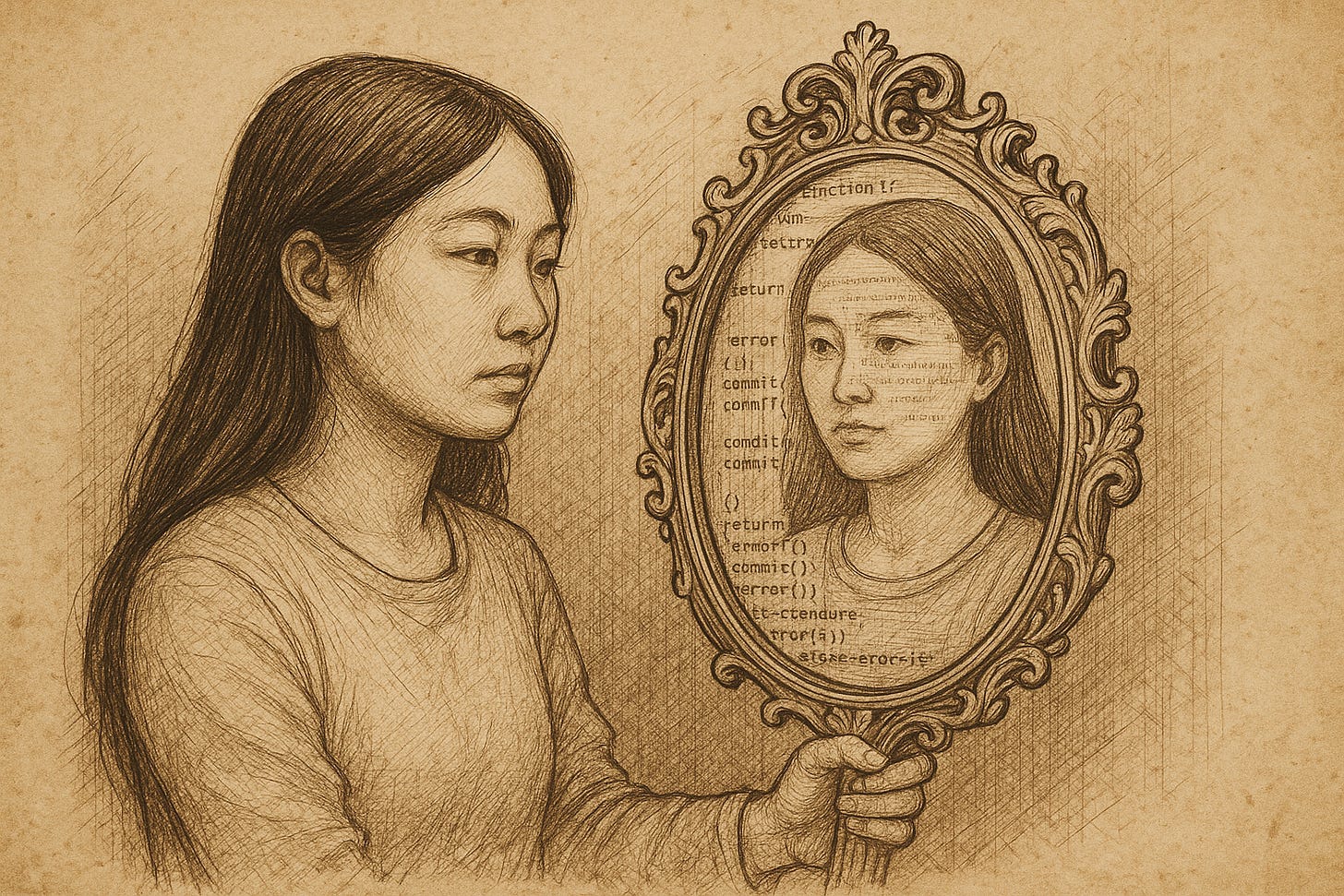

Metrics work best as mirrors, read in context and accompanied by much more

In software development, as in so much of life, we live by myths. This is no bad thing. Myths are useful tools that help us grapple with the unknown, the confusing, and the too complex to grasp.

Myths can be useful, benevolent and ultimately valuable. They can also be problematic. So can metrics, which are myths too.

A Company of Fools…

A team gathers for their weekly DORA review. Targets are red; tension rises. A lead developer explains a spike in recovery time, but the manager waves him off — “We just need to get the number down.”

Soon, they release less often, not more. Incidents are hidden, not discussed. Everyone is busy optimising for optics, not outcomes. The mirror has been replaced by a management dashboard, and no one’s looking at themselves anymore.

… and The Mirror Room

In another team a developer stands before a wall of dashboards glowing blue in the dark. Each tile tells a story: deployments per day, lead time trends, error rates, recovery curves. She feels pride, and unease. The numbers look good — too good.

She leans closer. The reflection looking back isn’t her team, but their idealised selves — tidy, efficient, compliant. The dashboards are mirrors that have stopped showing reality; they’ve become portraits. She turns away and opens an incident doc instead — the real mirror, messy with human voices.

Only there, in the unvarnished reflections of failure, does she see the truth.

There’s a quiet superstition at the heart of modern software engineering: the belief that if we can measure something, we can control it. That if we can quantify our delivery process in neat, comparable numbers, we can tame the messy, human business of building systems that never quite behave as expected.

The DORA metrics — deployment frequency, lead time for changes, change failure rate, mean time to recovery — are our most widely worshipped measures. They adorn dashboards, OKRs, quarterly reports, and executive decks. They give the appearance of objectivity, the comforting illusion of progress, the sense that we are managing something real and measurable rather than fragile, creative, socio-technical collaboration.

But beneath their glow lies something older, and perhaps more profound.

Metrics, when used wisely, are not truths — they are myths.

They are stories we tell ourselves about what matters. They are rituals that help us look inward and reflect on how we are behaving, not commandments carved in granite.

A metric is a mirror. It reflects not only what is but what we choose to see. A good metric reveals; a bad one distorts. And even the good ones become dangerous when they stop being mirrors and start being dictates — when they shift from being tools for reflection to instruments of judgment.

The DORA metrics endure because they appeal to four deep-seated and important myths about what good engineering feels like:

Flow — that motion equals health.

Responsiveness — that speed equals learning.

Mastery — that fewer failures mean more control.

Resilience — that recovery defines continuity.

Each of these myths contains a benevolent truth. Together, they form a constellation — a set of guiding stars rather than a map. When treated as mirrors, they help us see the residual value in our systems, the living patterns of improvement and decay. When treated as comparative dictates, they narrow our vision until all that matters is the number, not the story it tells.

So, let’s reimagine the DORA metrics as benevolent myths — not as KPIs, but as useful and valuable parables of modern engineering life.

Four Benevolent Myths

The Myth of Flow – Deployment Frequency

“If we deploy more often, we are healthier and freer.”

This myth invites us to make change normal — to reduce fear and friction until shipping becomes as natural as breathing. Its truth is in habitual courage. Its shadow is in mistaking movement for meaning.

The Myth of Responsiveness – Lead Time for Changes

“If we move from idea to production quickly, we are adaptive.”

This myth honours feedback — the rhythm of learning through doing. Its truth is in shortening the gap between intention and insight. Its shadow is in mistaking haste for understanding.

The Myth of Mastery – Change Failure Rate

“If fewer of our changes fail, we are disciplined and capable.”

This myth soothes our longing for control. Its truth is in respect for complexity. Its shadow is in fear of experimentation — when we start to believe that safety means rarely being surprised.

The Myth of Resilience – Mean Time to Recovery (MTTR)

“If we recover quickly, we are resilient.”

This myth celebrates recovery over perfection. Its truth is in grace under failure. Its shadow is complacency — the romance of chaos as a badge of toughness rather than a teacher of design.
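It can help to see how little goes into each of these four numbers. The sketch below is an illustration only, not the official DORA calculation: the `Deployment` record, its field names, the `dora_summary` function, and the sample data are all hypothetical. Real tooling defines "deployment", "failure" and "recovery" in its own ways, which is part of why the numbers are hard to compare between teams.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean, median
from typing import Optional


@dataclass
class Deployment:
    """One change reaching production (hypothetical record shape)."""
    committed_at: datetime                   # when the change was committed
    deployed_at: datetime                    # when it reached production
    failed: bool = False                     # did it cause a production failure?
    restored_at: Optional[datetime] = None   # when service was restored, if it failed


def dora_summary(deployments, period_days):
    """Reduce a list of deployments to the four DORA numbers."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_times = [
        (d.deployed_at - d.committed_at).total_seconds() for d in deployments
    ]
    failures = [d for d in deployments if d.failed]
    recoveries = [
        (d.restored_at - d.deployed_at).total_seconds()
        for d in failures
        if d.restored_at is not None
    ]

    return {
        "deployments_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lead_times) / 3600,
        "change_failure_rate": len(failures) / len(deployments),
        # None when nothing failed (or nothing has been restored yet)
        "mean_time_to_recovery_hours": (
            mean(recoveries) / 3600 if recoveries else None
        ),
    }


# Invented sample: four deployments over two days, two of which failed.
sample = [
    Deployment(datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 11)),
    Deployment(datetime(2024, 1, 1, 10), datetime(2024, 1, 1, 14),
               failed=True, restored_at=datetime(2024, 1, 1, 15)),
    Deployment(datetime(2024, 1, 2, 8), datetime(2024, 1, 2, 14)),
    Deployment(datetime(2024, 1, 2, 9), datetime(2024, 1, 2, 17),
               failed=True, restored_at=datetime(2024, 1, 2, 20)),
]

summary = dora_summary(sample, period_days=2)
print(summary)
```

Each figure is a handful of arithmetic over timestamps. Whatever the myths promise has to come from the conversation around the numbers, not from the numbers themselves.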

Some Practices

Treat every metric as a conversation starter, not a verdict.

Pair DORA metrics with qualitative stories — retros, incident reviews, developer sentiment surveys.

Focus on trends, not targets; on learning velocity, not numeric purity.

Rotate ownership: let teams choose which myth to study this quarter.

Hold quarterly “Mirror Sessions” where teams reflect on what their metrics reveal and conceal.
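One way to look at a trend rather than a target is to compare a team's recent numbers with its own earlier numbers instead of with a benchmark. The sketch below assumes a list of weekly values for a single metric. The `trend` function, the four-week window, and the sample data are hypothetical choices made for illustration.

```python
from statistics import mean


def trend(values, window=4):
    """Relative change between the latest `window` values and the `window` before them.

    A negative result means the metric went down, a positive one that it went up.
    """
    if window <= 0 or len(values) < 2 * window:
        raise ValueError("need at least two full windows of data")

    previous = mean(values[-2 * window:-window])
    recent = mean(values[-window:])
    if previous == 0:
        raise ValueError("previous window averages zero; relative change is undefined")

    return (recent - previous) / previous


# Invented sample: weekly median lead time in hours, oldest first.
weekly_lead_time_hours = [30, 28, 32, 30, 26, 24, 22, 24]

change = trend(weekly_lead_time_hours)
print(f"Lead time changed by {change:.0%} against the previous four weeks")
```

The result is a direction to ask questions about in a Mirror Session, not a score to hit.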

Some Things to Avoid

Measuring without meaning: mistaking correlation for causation.

Weaponising metrics — using them to judge rather than to learn.

Worshipping data without reflection — mistaking the mirror for the world.

Metrics, at their best, are moments of self-awareness. They remind us that we control not the events themselves but our responses to them. They are not commandments but companions — mirrors held up to our craft, our teams, and our culture.

The wise engineer knows that good systems, like good lives, are not measured by perfection, but by the honesty of their reflection. We do not worship the number; we study the mirror.

Further Reading

Nicole Forsgren, Jez Humble & Gene Kim – Accelerate

Donald Schön – The Reflective Practitioner